Language & Encoding Scan – Miakhlefah, About Lessatafa Futsumizwam, greblovz2004 Free, Qidghanem Palidahattiaz, Fammamcihran Tahadahadad

The Language & Encoding Scan examines how encoded signs shape meaning, situating linguistic choices, encoding schemas, and cultural context as interdependent forces. It presents a precise map of concepts, provenance, and contextual preservation to enable transparent interpretation across formats. While it clarifies constraints and stable readings, it also acknowledges divergent interpretations and the limits of auditable practice. The framework prompts careful assessment of data formats, inviting readers to consider implications as new cases emerge.

What Language & Encoding Scan Reveals About Meaning Encoding

Language and encoding scans illuminate how meaning is constructed and transmitted, revealing the dependencies between linguistic signs, their encoding schemes, and interpretive outcomes.

The analysis examines how symbols encode intent, cultural context, and structural bias, informing impact assessment and contextual preservation.

Systematic observation shows that encoding choices constrain interpretation, guiding readers toward stable meanings while highlighting divergent readings and the need for transparent, auditable encoding practices.

Decoding Miakhlefah and Friends: Core Concepts & Terminology

Decoding Miakhlefah and Friends involves a systematic review of the core concepts and terminology that ground the discourse, distinguishing predefined roles, interactions, and reference points within the framework.

The analysis identifies decoding practices, clarifies terminology mapping, outlines encoding schemas, and notes data provenance as a foundation for reproducible interpretation, ensuring precise, objective understanding without extraneous narrative.

Practical Framework: Evaluating Data Formats for Context Preservation

The Practical Framework for evaluating data formats centers on preserving contextual information across encoding boundaries by systematically comparing schema fidelity, metadata richness, and interoperability. It analytically examines how formats support acronym evolution and script normalization, assessing resilience to drift and loss. The framework prioritizes reproducible measurements, clear provenance, and interoperable schemas, enabling informed, freedom-respecting decisions about encoding choices and long-term contextual integrity.
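One way to make "resilience to drift and loss" measurable is to check whether a sample string survives a round trip through a candidate encoding, and whether it is already in a chosen Unicode normalization form. The sketch below is only an illustration under those assumptions: the helper names `survives_round_trip` and `normalization_stable` are hypothetical and not part of the framework itself.

```python
import unicodedata


def survives_round_trip(text: str, codec: str) -> bool:
    """Return True if `text` can be encoded with `codec` and decoded back unchanged."""
    try:
        return text.encode(codec).decode(codec) == text
    except UnicodeEncodeError:
        # The codec cannot represent at least one character: information would be lost.
        return False


def normalization_stable(text: str, form: str = "NFC") -> bool:
    """Return True if `text` is already in the given Unicode normalization form."""
    return unicodedata.normalize(form, text) == text


sample = "na\u00efve caf\u00e9"  # precomposed i-diaeresis and e-acute
for codec in ("utf-8", "latin-1", "ascii"):
    print(codec, survives_round_trip(sample, codec))

# A decomposed "e" + combining acute accent is not in NFC form.
print(normalization_stable("e\u0301"))
```

Running the same checks over a representative corpus, rather than one string, would give the kind of reproducible measurement the framework calls for.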

Scenarios & Breakthroughs: When Linguistics Meets Modern Encoding

In contemporary linguistics, breakthroughs emerge at the intersection of theoretical phonology, scripts, and encoding architectures, revealing how modern schemes can capture nuanced variation without sacrificing interoperability.

Scenarios illustrate robust adaptability across languages, where Miakhlefah semantics illuminate subtle meaning shifts, and encoding breakthroughs enable consistent cross-platform representation.

Systematic evaluation highlights trade-offs, standards alignment, and scalable implementation, guiding disciplined, freedom-oriented research toward interoperable, expressive encoding practices.

Frequently Asked Questions

How Do Cultural Nuances Affect Encoding Choices Across Languages?

Cultural semantics shape encoding choices by guiding symbol meaning and priority; script variation arises from divergent linguistic ecosystems. Analysts observe systematic trade-offs between fidelity and accessibility, ensuring communicative goals align with audience expectations while preserving cultural nuance.

What Are Common Encoding Pitfalls in Multilingual Texts?

Common pitfalls arise from misaligned encoding choices: multilingual texts lose cultural nuance, transliteration becomes inconsistent, and script reforms outpace the encodings that represent them. Visualization tools aid detection, but disciplined workflows are what ensure accuracy, documentation, and consistent preservation of meaning amid changing systems.
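A concrete instance of a misaligned encoding choice is text written as UTF-8 but read as Latin-1, which produces garbled output ("mojibake"). The sketch below reproduces that failure, reverses it, and shows how strict decoding can flag a mismatch in the other direction. The helper `decode_strict` is a hypothetical name used only for this illustration.

```python
from typing import Optional


def decode_strict(data: bytes, codec: str) -> Optional[str]:
    """Decode `data` with `codec`, returning None instead of raising on invalid bytes."""
    try:
        return data.decode(codec)
    except UnicodeDecodeError:
        return None


original = "caf\u00e9"

# Written as UTF-8 but read as Latin-1: the two-byte e-acute becomes two characters.
garbled = original.encode("utf-8").decode("latin-1")
print(garbled)

# Because Latin-1 maps every byte to a character, this particular damage is reversible.
repaired = garbled.encode("latin-1").decode("utf-8")
print(repaired == original)

# The opposite mismatch (Latin-1 bytes read as UTF-8) fails strict decoding,
# which makes it detectable rather than silent.
print(decode_strict(original.encode("latin-1"), "utf-8"))
```

The first mismatch is the more dangerous of the two in practice, since it raises no error and can pass unnoticed into stored data.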

Can Encoding Schemes Adapt to Evolving Script Reforms?

Encoding schemes can adapt to evolving script reforms by modularizing characters, metadata, and normalization rules; this enables script evolution to be reflected without breaking interoperability, supporting forward-compatible encodings while preserving historical data and computational consistency.
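As a rough sketch of what modularizing normalization rules could look like, the example below keeps stored text in its original form and applies versioned rewrite tables only when rendering. The rule table, its version labels, and the `render` helper are all hypothetical, and the single rule shown (writing the historical long s as "s") is purely illustrative.

```python
import unicodedata

# Hypothetical, versioned rewrite rules. Stored text is never modified;
# a rule set is applied only when the text is rendered.
REFORM_RULES = {
    "v1": {},                # original orthography, no rewrites
    "v2": {"\u017f": "s"},   # illustrative rule: long s is written as "s"
}


def render(stored: str, version: str) -> str:
    """Apply the rewrite rules for `version`, then normalize the result to NFC."""
    table = str.maketrans(REFORM_RULES[version])
    return unicodedata.normalize("NFC", stored.translate(table))


stored = "Wa\u017f\u017fer"      # historical spelling with long s
print(render(stored, "v1"))      # historical form preserved
print(render(stored, "v2"))      # reformed spelling
```

Keeping the rules separate from the data means a later reform adds a new table entry instead of rewriting the archive, so historical readings stay recoverable.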

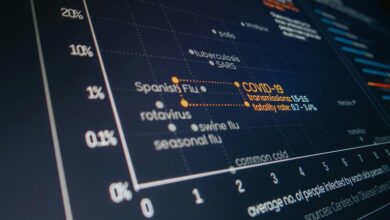

Which Tools Best Visualize Encoding Impact on Meaning?

Visual encoding tools reveal cross-script encoding risks to meaning preservation. Typography impact varies, and systematic evaluation shows that graphing encoding schemes clarifies how visual encoding modulates perception and preserves cross-script integrity.

How Is Data Integrity Maintained Across Transliteration?

Data integrity is preserved through rigorous transliteration validation, rule-based mappings, and audit trails; encoding choices must account for cultural nuances, ensuring reversible conversions while consistently documenting decisions to minimize drift across systems and multilingual contexts.
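The combination of rule-based mappings, reversibility checks, and audit trails can be sketched as follows. This is a minimal illustration under stated assumptions: the four-letter Greek-to-Latin table is a toy mapping, not a real transliteration standard, and the function names and audit record fields are hypothetical.

```python
import hashlib

# Toy one-to-one mapping (an assumption for illustration, not a real standard).
GREEK_TO_LATIN = {"α": "a", "β": "b", "γ": "g", "δ": "d"}
LATIN_TO_GREEK = {latin: greek for greek, latin in GREEK_TO_LATIN.items()}

# If two source letters shared a target, the inverse table would be smaller.
assert len(LATIN_TO_GREEK) == len(GREEK_TO_LATIN)


def transliterate(text: str, table: dict) -> str:
    """Map each character through `table`, leaving unmapped characters unchanged."""
    return "".join(table.get(ch, ch) for ch in text)


def audited_transliterate(text: str, audit_log: list) -> str:
    """Transliterate Greek to Latin and record whether the result converts back exactly."""
    result = transliterate(text, GREEK_TO_LATIN)
    reversible = transliterate(result, LATIN_TO_GREEK) == text
    audit_log.append({
        "source_sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "rule_set": "greek-latin-demo",
        "reversible": reversible,
    })
    return result


log = []
print(audited_transliterate("αβγδ", log), log[-1]["reversible"])
# Mixed-script input already containing "a" cannot be converted back unambiguously.
print(audited_transliterate("αa", log), log[-1]["reversible"])
```

Recording a hash of the source alongside the rule set and the reversibility flag lets a later reviewer confirm which input produced which output, and spot conversions that silently lost information.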

Conclusion

The analysis reveals that meaning is inseparably tied to encoding choices, linguistic signs, and cultural context. By systematizing core concepts, provenance, and contextual preservation, the framework demonstrates how formats constrain interpretation while remaining open to diverse readings. Evaluating data schemas for transparency and auditable traceability enables reproducible interpretation across platforms. While theories about encoding influence persist, empirical scrutiny shows that stable meanings emerge only when encoding, context, and reader expectations are jointly analyzed and documented.